Becoming a barefaced liar in reverse detective adventure Who's Lila?

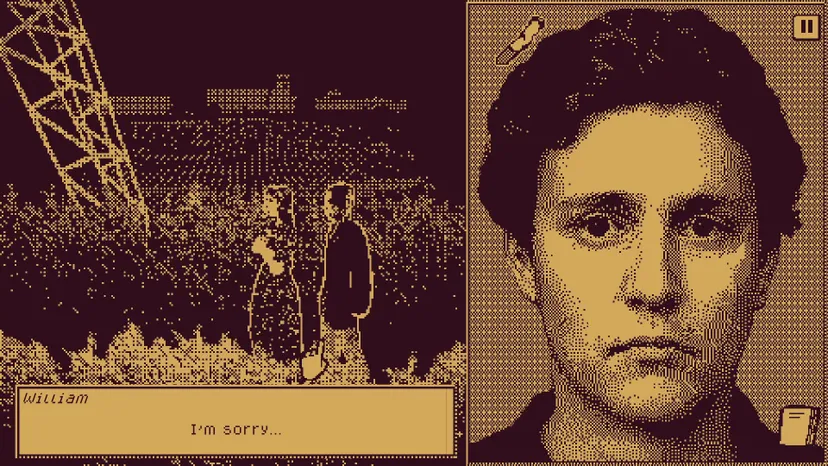

In Who’s Lila? a woman has gone missing, and Will was the last person to see her alive. Except Will only communicates using his facial expressions, so the player must tug, stretch, and shape his face, Mario 64-style, in order to communicate what they want to say as they navigate these mysterious events.

Game Developer spoke with Garage Heathen, developer of the eerie detective game, to talk about what drew them to use facial expressions to communicate throughout the game, how they taught the game to recognize so many faces, and the challenges that came from working with this kind of narrative system.

Game Developer: Who’s Lila? sees players carrying on a conversation in a mystery game without saying a word. What interested you in this idea? What inspired it?

Heathen: So, the face-shifting mechanic was inspired by a mixture of things. I guess the main one was L.A. Noire with its hyper-realistic yet often uncanny facial expressions. I thought it would be fun to invert that mechanic and, instead of figuring out potential liars, try the role of being the liar yourself.

The second one was that one YouTube series about interrogations from JCS - Criminal Psychology. The variety of reactions and characters they interrogated inspired the many routes of the game (and, particularly, the interrogation scene).

As for carrying out conversations without saying a word, early concepts of the game included some traditional dialogue choices (so you would have been able to choose what to say). However, I decided against that since, in my view, it would have diluted the main gameplay loop too much.

Players reply to questioning and other characters through manipulating a face. How did you design this face-manipulation mechanic? What thoughts went into making it work?

From the get-go in creating the game, I just figured it had to be a 2D face with parts that could be dragged, a split-screen, and a gritty, pixelated 1-bit look. A 2D face was easier to implement than a 3D model (a short time prior, Unity released a system for 2D skeleton animation) and, as a bonus, turned out to look really uncanny. I basically used the existing skeleton animation system and added the functionality to drag animation bones with the cursor.
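As a rough illustration of dragging a bone with the cursor, here is a minimal Python sketch (the game itself was built in Unity). The function name `drag_bone` and the `max_offset` limit are hypothetical: the interview does not say whether bones are limited in how far they can travel, so the clamp is purely an assumption made for the example.

```python
import math

def drag_bone(rest, cursor, max_offset):
    """Move a bone toward the cursor, at most max_offset from its rest position.

    rest and cursor are (x, y) tuples. The clamp is an assumed design choice,
    not something confirmed by the developer.
    """
    dx = cursor[0] - rest[0]
    dy = cursor[1] - rest[1]
    dist = math.hypot(dx, dy)
    if dist <= max_offset:
        # Cursor is within reach: the bone follows it exactly.
        return (cursor[0], cursor[1])
    # Cursor is too far: keep the direction, shorten the distance.
    scale = max_offset / dist
    return (rest[0] + dx * scale, rest[1] + dy * scale)
```

A limit like this would keep a face part attached to the face while still allowing exaggerated grimaces.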

Likewise, how did you get the game to recognize certain facial expressions as specific responses and tones?

This one was tricky, but thanks to luck and the advice of @ibedenaux I quickly decided to use a neural network solution. I used Emgu CV for Unity.

In simple terms, a neural network would train on large amounts of data (snapshots of Will’s face showing different emotions). Each snapshot was labeled with its emotion, but the model wouldn’t see the label before guessing. The NN would make a guess and then adjust itself based on the errors it made. Assembling the actual dataset was relatively easy. Since we only have one face to work with, there was no need for large sets of pictures of different people’s faces. My friends, helpers, and I would make all sorts of grimaces by hand and then add them to the emotion library. I had to make a separate app for that.

One drawback of this system: although it’s much quicker to train, it won’t work with other faces, so no modding (yet).
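The "guess, then adjust based on the errors" loop can be sketched in a few lines of Python. This is not the game's actual model, which used Emgu CV on snapshots of the face. It is a far simpler multi-class perceptron, and the emotion list, the three numeric features (a constant bias, brow raise, mouth curve), and the sample values are all invented for illustration.

```python
import random

EMOTIONS = ["neutral", "happy", "sad"]  # hypothetical label set

# Hypothetical hand-made faces: (bias, brow_raise, mouth_curve) -> emotion
SAMPLES = [
    ((1.0, 0.0, 0.0), "neutral"),
    ((1.0, 0.1, 0.1), "neutral"),
    ((1.0, 0.0, 0.9), "happy"),
    ((1.0, 0.2, 0.8), "happy"),
    ((1.0, 0.9, -0.8), "sad"),
    ((1.0, 0.8, -0.9), "sad"),
]

def predict(weights, features):
    """Return the index of the emotion with the highest score."""
    scores = [sum(w * x for w, x in zip(row, features)) for row in weights]
    return scores.index(max(scores))

def train(samples, n_features, max_epochs=200, seed=0):
    """Guess each sample's emotion; on a wrong guess, nudge the weights."""
    rng = random.Random(seed)
    weights = [[0.0] * n_features for _ in EMOTIONS]
    data = list(samples)
    for _ in range(max_epochs):
        rng.shuffle(data)
        mistakes = 0
        for features, name in data:
            label = EMOTIONS.index(name)
            guess = predict(weights, features)  # the label is not used here
            if guess != label:
                mistakes += 1
                for i, x in enumerate(features):
                    weights[label][i] += x   # pull the right answer up
                    weights[guess][i] -= x   # push the wrong guess down
        if mistakes == 0:
            break  # every training face is now classified correctly
    return weights

weights = train(SAMPLES, n_features=3)
```

Because every sample comes from the same face, a small hand-made set like this can be enough to train on, which matches the trade-off described above: quick to train, but tied to one face.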

Did any challenges, funny moments, or interesting development stories come out of getting these mechanics to work properly?

So, considering the nature of the mechanic and the freedom of making all sorts of wild faces – yeah, it was full of funny stuff. An assortment of guessing errors in the first stages of development, surprising judgements that the NN made for some of the weirder faces people made – lots of wacky stuff.

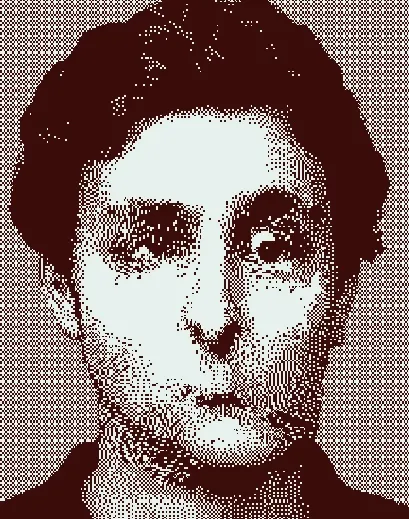

Testing from other people helped a lot. For example, there was a period in development when the NN thought that any face with raised eyebrows meant sadness, disregarding the mouth altogether. I wasn’t even aware of that since, in the training data, I never included the raised eyebrows for anything but the sad face.

(At some point it thought this was a smile…)
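The raised-eyebrows problem is a gap in the training data: a trait that only ever appears with one label gives the model no reason to look at anything else. A simple audit can surface such traits before training. The sketch below is an assumption-laden illustration, not the developer's tooling: the trait names, the labels, and the idea of describing each face as a set of named traits are all hypothetical.

```python
from collections import defaultdict

# Hypothetical training faces described as sets of traits, with a label each.
SAMPLES = [
    ({"brows_raised", "mouth_down"}, "sad"),
    ({"brows_raised", "mouth_flat"}, "sad"),
    ({"brows_neutral", "mouth_up"}, "happy"),
    ({"brows_neutral", "mouth_flat"}, "neutral"),
    ({"brows_neutral", "mouth_down"}, "sad"),
]

def single_label_traits(samples):
    """Return {trait: label} for every trait seen with exactly one label."""
    labels_by_trait = defaultdict(set)
    for traits, label in samples:
        for trait in traits:
            labels_by_trait[trait].add(label)
    return {
        trait: next(iter(labels))
        for trait, labels in labels_by_trait.items()
        if len(labels) == 1
    }

flagged = single_label_traits(SAMPLES)
```

Here `flagged` includes `brows_raised` mapped to `sad`. Not every flagged trait is a problem, but each one is a candidate for adding counterexamples, such as a smiling face with raised eyebrows.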

What thoughts went into creating a mystery narrative where the player could respond by making faces? Did this mechanic shape how the story could work in any way? Or was it the other way around?

In real life, the non-verbal communications we have are often much more important than what we actually say. I felt this was a good base for a game mechanic. Interestingly, at first, I was planning to make a much more elaborate system with an “ingenuity” meter and different gradations of each emotion. However, upon trying out the actual facial part dragging, I decided to tone it down a bit. The faces people could make were way too drastic for such fine-tuning and the time limit implemented in the game wouldn’t help. Let’s be real, almost none of the faces you’d make in a realistic playthrough are going to be natural or feel “genuine.”

So, the mechanic and story would alternate in dictating each other. Sometimes a story bit would be inspired by possible facial interactions and sometimes the face-making had to be built around the story. Although, I must say, as ManlyBadassHero mentions, some of the deeper parts of the game feature less face-making and more of other, more traditional forms of gameplay, like puzzle-solving and stealth-action.

What thoughts went into crafting a story that could go in so many different directions based on the facial expression mechanic? When players could make so many different responses to questions and conversations, how do you keep the story on track? Or how do you make so many different story directions possible?

Well, it wasn’t easy, and writing out all the different paths was probably the most time-consuming part of the development. Most of them I just wrote out individually, but (as you usually do with branching narratives) some branching dialogue parts do lead to the same outcome, loop around, or recycle a neighboring storyline with a different outcome.

As for keeping the story on track, I always treat major dialogues (low-key bosses of the game) as a means of sharing information. So, the more intense the dialogue, the more important the revealed information. The writing process then consists of trying to piece together all the important bits into a coherent non-linear narrative. Of course, some ideas come during writing, so I would spontaneously add a little joke or a whole new branch just from that.
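Branches that converge on the same outcome or loop back can be pictured as a small graph keyed by the recognized expression. The Python sketch below is only an illustration: the scene names, the expressions, and the transitions are invented, and nothing here reflects how the game actually stores its dialogue.

```python
# Hypothetical scenes: each maps a recognized expression to the next scene.
# An empty mapping marks an ending.
DIALOGUE = {
    "knock": {"happy": "small_talk", "sad": "concern", "neutral": "knock"},  # loops
    "small_talk": {"happy": "invited_in", "sad": "concern"},
    "concern": {"happy": "invited_in", "neutral": "door_closed"},
    "invited_in": {},
    "door_closed": {},
}

def play(graph, start, expressions):
    """Follow a sequence of expressions and return the scene reached."""
    scene = start
    for expression in expressions:
        choices = graph[scene]
        if not choices:
            break  # already at an ending
        # An expression with no branch keeps the player in the same scene.
        scene = choices.get(expression, scene)
    return scene

def reachable_endings(graph, start):
    """Collect every ending reachable from start, tolerating loops."""
    seen, stack, endings = set(), [start], set()
    while stack:
        scene = stack.pop()
        if scene in seen:
            continue
        seen.add(scene)
        if not graph[scene]:
            endings.add(scene)
        stack.extend(graph[scene].values())
    return endings
```

In this toy graph, both `["happy", "happy"]` and `["sad", "happy"]` end at `invited_in`, which is the kind of convergence that keeps the number of written paths manageable.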

What drew you to the visual style for the game? What do you feel this style added to the mood and feel of the game?

I am enamored with the style of World of Horror and Critters for Sale, so even before I knew what the story was going to be, I’d already decided on the look of the game. Everything else, from mechanics to the story, was dictated by the visual style. I tend to work like that. For me, the look of the game almost always comes first. In Who’s Lila? the shapes are hard to discern and everything feels kind of dreamy and gritty. Another cool thing about the style – the scenes are really quick to assemble.

A lot of things didn’t need any texturing at all and, since the camera angles are fixed, a lot of props could be done just by using flat planes with an image on them.

One of the challenges, of course, was to make the scene more or less readable. Although some things were obscured by design, others had to be easy to recognize. This required a certain amount of work with composition and lighting.

The facial expression mechanic adds a playfulness and unease to the game – William’s faces can be both creepy and hilarious at the same time. How do you feel this affected the mood of the game?

This is a bit difficult to answer, as I somehow overlooked the humorous aspect of face-making at the early stages of development. Who’s Lila? becoming a game with huge meme potential was not something I planned for, actually.

So, when the demo shipped, at first, I was quite worried that possible goofy faces would break the intended atmosphere. Surprisingly, that didn’t happen. Judging from the reviews and comments people made, the faces seem to combine the two qualities – being funny and uncanny at the same time, which I see as a success!